AI Model Selection: Cost vs. Quality Trade-offs

Learn how to match AI models to task complexity, implement multi-model strategies, and cut AI costs by 5–10x without sacrificing output quality.

Not every problem needs GPT-4. But how do you know when to use an expensive model versus a cheaper one? And how much money are you leaving on the table by defaulting to the most powerful model for every task?

This is the multi-model deployment challenge that every AI company faces. AI model selection is the practice of matching each task in your product to the model tier whose capability and cost are both appropriate for that task. The field of AI models is expanding fast — OpenAI, Anthropic, Google, Meta, Mistral, and dozens of others release new models every month. Each has different capabilities, different price points, and different trade-offs. Keeping up is becoming a full-time job.

You can compare current model prices across providers at the AI token pricing tracker to see real-time cost data before making selection decisions.

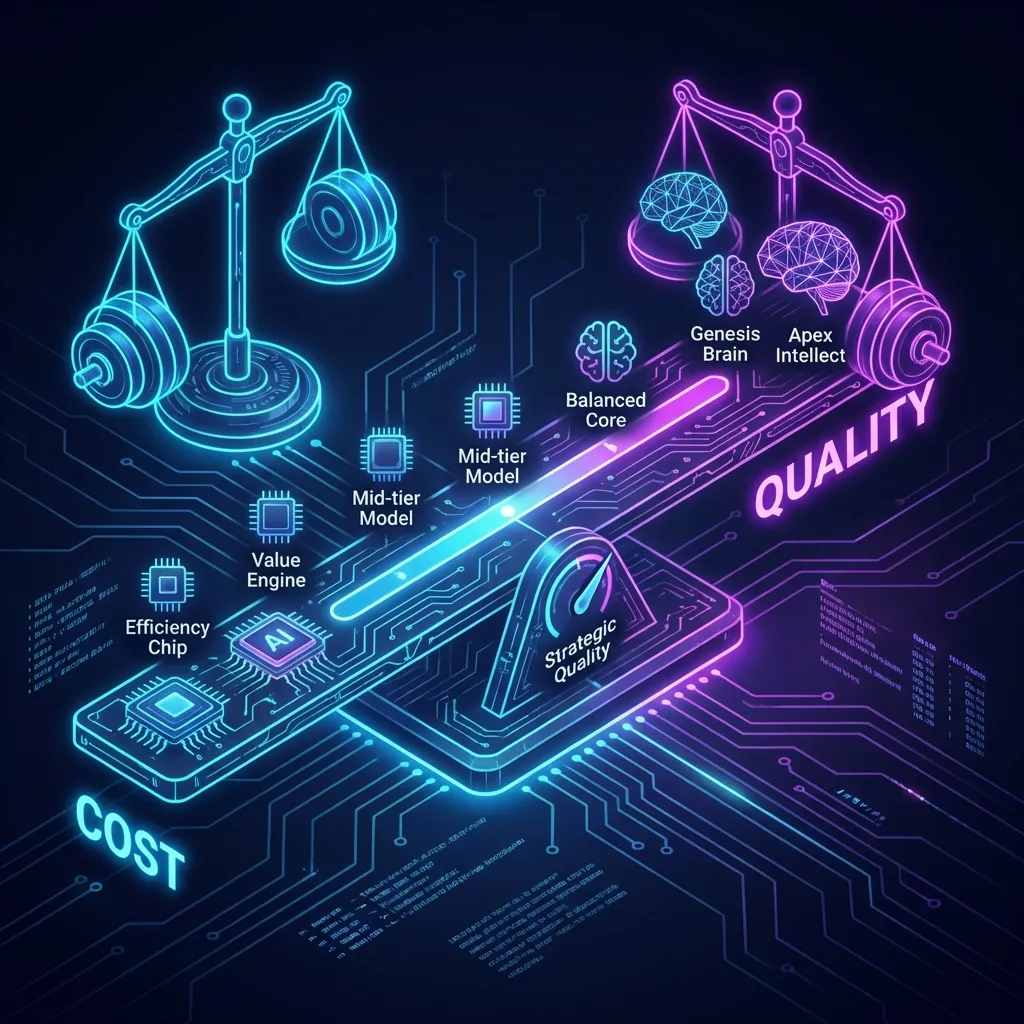

The Cost Spectrum of AI Models

For an AI product, cost of goods sold (COGS) is dominated by token spend on model inference. As of early 2024, GPT-4 Turbo costs about $10 per million input tokens and $30 per million output tokens. Claude 3 Opus is priced higher, at about $15 and $75 respectively. These are your premium models, with the strongest reasoning capabilities, large context windows, and the highest-quality outputs.

In the middle tier, models like GPT-3.5 Turbo run around $0.50 per million input tokens and $1.50 per million output tokens — roughly 20 times cheaper than the premium tier. The quality is lower, but for many tasks it’s adequate.

Then there’s the budget tier: open-source models like Llama 2 or Mistral that you can run yourself, or smaller API models that cost pennies per million tokens. Quality drops further, but so does cost, often by 100x compared to premium models.

Companies using GPT-4 for every operation are almost certainly overpaying, and with a roughly 20x gap between the premium and middle tiers, the margin can be large. Figuring out where you can use cheaper models without degrading quality requires systematic testing and ongoing monitoring.
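To put the tier prices above in concrete terms, here is a minimal sketch that prices one workload at both tiers. The per-million-token prices are the early 2024 figures quoted in this section; the workload (1,000 input tokens, 500 output tokens, one million requests per month) and the function name `request_cost` are assumptions chosen for illustration.

```python
# Prices in USD per million tokens, taken from the early 2024 figures quoted above.
PRICES = {
    "gpt-4-turbo": {"input": 10.00, "output": 30.00},
    "gpt-3.5-turbo": {"input": 0.50, "output": 1.50},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the listed per-million-token prices."""
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


# Hypothetical workload: 1,000 input and 500 output tokens per request,
# one million requests per month.
REQUESTS_PER_MONTH = 1_000_000
monthly = {
    model: request_cost(model, 1_000, 500) * REQUESTS_PER_MONTH for model in PRICES
}

for model, cost in monthly.items():
    print(f"{model}: ${cost:,.2f} per month")
```

Under these assumptions the same traffic costs about $25,000 per month on the premium tier and about $1,250 on the middle tier, which is the 20x gap described above.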

The Task Complexity Hierarchy

Different tasks have different complexity requirements. Some tasks need the reasoning power of a frontier model. Others don’t. The challenge is determining which is which.

Simple classification tasks are a clear example. A customer support tool that routes tickets to the right department — sales, support, billing, technical — is a classification problem, and a relatively simple one. A fine-tuned smaller model or GPT-3.5 handles it well at a fraction of the cost.

Content summarization is another case where the quality difference between GPT-4 and GPT-3.5 often doesn’t justify a 20x price premium. For most documents, the difference in nuance is marginal.

Complex reasoning tasks do benefit from premium models. A tool that analyzes complex legal documents, identifies subtle implications, and provides strategic advice needs the capability it pays for. The quality difference will be visible to users.

The Hidden Costs of Model Selection

The stated API pricing is not the only cost factor. Different models have different latency profiles. A slower model imposes a user experience cost even when its direct API cost is lower.

Context window size is another factor. In a RAG system, a model with a larger context window may be cheaper overall even at a higher per-token rate, because you can fit more context in a single call instead of making multiple calls or building more complex retrieval strategies.

Model reliability varies. Some models fail more often and require more retry logic. Some produce outputs that need more post-processing. Some have quirks that require careful prompt engineering. All of this adds development time and maintenance burden with real costs that don’t appear on API bills.
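One way to make the reliability cost visible is to price a successful response rather than a single call. The sketch below assumes failures are independent, every attempt is billed, and failed calls are retried until one succeeds; under those assumptions the expected number of attempts is 1 / (1 - failure rate). The prices and failure rates are hypothetical.

```python
def effective_cost_per_success(cost_per_call: float, failure_rate: float) -> float:
    """Expected spend per successful response when failed calls are retried.

    Assumes independent failures and that every attempt is billed, so the
    expected number of attempts is 1 / (1 - failure_rate).
    """
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    return cost_per_call / (1 - failure_rate)


# Hypothetical numbers: a cheaper model that fails 20% of the time
# versus a slightly pricier one that fails 1% of the time.
cheap = effective_cost_per_success(0.0010, 0.20)
reliable = effective_cost_per_success(0.0012, 0.01)

print(f"cheap model:    ${cheap:.6f} per successful call")
print(f"reliable model: ${reliable:.6f} per successful call")
```

With these made-up numbers the model with the lower list price ends up more expensive per successful call, before counting the latency and engineering time that retries add.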

Model update velocity also matters. AI providers release new models and deprecate old ones constantly. If you’ve built your product around a specific model, you need to reassess regularly. A new model may offer similar quality at half the cost. A competing provider may release a model better suited to your use case.

The Evals Problem

Smart model selection requires good evals: the ability to compare different models on your specific tasks with your specific data. Building good evals is slow, expensive work.

One engineer spent $700 running evals on just 100 test cases. That cost compounds quickly when evaluating multiple models across multiple use cases, and you need to repeat the process as new models release and your product changes.
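The compounding is easy to estimate. The sketch below takes the $7 per test case implied by the anecdote and assumes, without confirmation from the source, that the figure covers one model on one use case and that cost scales linearly with cases, models, use cases, and reruns. The example program size is hypothetical.

```python
# From the anecdote above: $700 for 100 test cases, i.e. $7 per case.
# Assumption: that figure covers a single model on a single use case.
COST_PER_CASE = 700 / 100


def eval_budget(cases: int, models: int, use_cases: int, runs_per_year: int) -> float:
    """Rough yearly eval spend, assuming cost scales linearly in every dimension."""
    return COST_PER_CASE * cases * models * use_cases * runs_per_year


# Hypothetical program: 100 cases, 4 candidate models, 5 use cases, rerun quarterly.
yearly = eval_budget(cases=100, models=4, use_cases=5, runs_per_year=4)
print(f"${yearly:,.0f} per year")
```

Under these assumptions a modest eval program already costs about $56,000 per year, which is why eval budgets deserve their own line item.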

Evals also need to be comprehensive. Measuring accuracy or quality alone is not enough. You need to measure latency, failure rates, consistency, and cost. You need to test with realistic data representing actual usage patterns, and weight factors appropriately for your product.
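A simple way to combine these measurements is a weighted score. The following is a sketch, not a standard method: the weights, the normalization caps, the class name `EvalResult`, and the two sets of results are all hypothetical, and you would tune them for your own product.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    model: str
    accuracy: float           # fraction of test cases judged correct, 0..1
    p95_latency_s: float      # 95th percentile latency in seconds
    failure_rate: float       # fraction of calls that errored or timed out, 0..1
    cost_per_1k_calls: float  # USD


# Hypothetical weights; they should sum to 1 and reflect product priorities.
WEIGHTS = {"accuracy": 0.5, "latency": 0.2, "reliability": 0.2, "cost": 0.1}


def score(result: EvalResult, max_latency_s: float, max_cost: float) -> float:
    """Weighted score in 0..1; latency and cost are scaled so lower is better."""
    latency = max(0.0, 1 - result.p95_latency_s / max_latency_s)
    cost = max(0.0, 1 - result.cost_per_1k_calls / max_cost)
    return (
        WEIGHTS["accuracy"] * result.accuracy
        + WEIGHTS["latency"] * latency
        + WEIGHTS["reliability"] * (1 - result.failure_rate)
        + WEIGHTS["cost"] * cost
    )


# Hypothetical eval results for two candidate models.
results = [
    EvalResult("premium-model", 0.95, 4.0, 0.01, 25.00),
    EvalResult("mid-tier-model", 0.88, 1.5, 0.02, 1.25),
]
best = max(results, key=lambda r: score(r, max_latency_s=5.0, max_cost=30.0))
print(best.model)
```

With these weights the mid-tier model scores higher despite lower accuracy. Raising the accuracy weight changes the outcome, which is the point: the weighting is a product decision, not a property of the models.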

Companies without robust eval frameworks end up making model selection decisions on instinct. Someone tries a new model, thinks it seems good, and ships it. Later they find it’s more expensive or less reliable than what they had before.

The Multi-Model Strategy

Companies operating at scale use different models for different parts of their product, matched to the specific requirements of each task. The engineering complexity is real, but the cost savings can be large.

For example: GPT-4 for the main conversational interface where quality matters, GPT-3.5 for backend classification tasks, and a cheaper model for sentiment analysis or content moderation. Each model is matched to a task where its capabilities are appropriate and its cost is justified.
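In its simplest form this is a static lookup table. The sketch below mirrors the example above; the task names and the `budget-model` identifier are placeholders, not real API model names.

```python
# Static task-to-model mapping. Task names are hypothetical, and
# "budget-model" is a placeholder for whichever cheaper model you choose.
TASK_MODEL = {
    "chat": "gpt-4",
    "ticket_classification": "gpt-3.5-turbo",
    "sentiment": "budget-model",
    "moderation": "budget-model",
}

# Unmapped tasks fall back to the premium model so that a missing entry
# costs money rather than quality.
DEFAULT_MODEL = "gpt-4"


def model_for(task: str) -> str:
    """Return the model assigned to a task, or the default if none is assigned."""
    return TASK_MODEL.get(task, DEFAULT_MODEL)


print(model_for("ticket_classification"))
print(model_for("contract_review"))
```

Defaulting to the premium model is a deliberate choice here: a new task that nobody has evaluated yet gets the safe option until someone tests a cheaper one.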

Some companies implement dynamic model routing — analyzing incoming requests in real time and routing them to the most appropriate model based on complexity. A simple question goes to a cheap model. A complex question goes to an expensive one. This maximizes cost efficiency while preserving quality where it counts.
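A toy version of dynamic routing is sketched below. The complexity heuristic (prompt length plus a few reasoning keywords), the threshold, and the model names are all assumptions made for illustration; production routers typically rely on a trained classifier or on eval data rather than keyword matching.

```python
# Hypothetical keywords that hint at a request needing multi-step reasoning.
REASONING_HINTS = ("why", "compare", "analyze", "explain", "trade-off", "implications")


def complexity_score(prompt: str) -> int:
    """Crude complexity estimate: longer prompts and reasoning keywords score higher."""
    text = prompt.lower()
    word_count = len(text.split())
    score = 0
    if word_count > 150:
        score += 2
    elif word_count > 40:
        score += 1
    # Substring matching is deliberately naive; it is enough for a sketch.
    score += sum(1 for hint in REASONING_HINTS if hint in text)
    return score


def route(prompt: str, threshold: int = 2) -> str:
    """Send prompts at or above the threshold to the expensive model."""
    if complexity_score(prompt) >= threshold:
        return "premium-model"
    return "cheap-model"


print(route("What are your opening hours?"))
print(route("Compare these two contracts and explain the implications of clause 4."))
```

Whatever heuristic you use, log the routing decision alongside the response so that evals can later check whether the cheap path is degrading quality.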

Multi-model strategies add operational complexity: multiple API keys, multiple rate limits, multiple fallback strategies. Each model needs separate monitoring and alerting. Costs need to be tracked separately to understand each model’s contribution to overall spend.
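Tracking spend per model and per use case can start as a small in-process ledger. This is a minimal sketch; the class name `SpendLedger` and the use-case labels are hypothetical, and a real system would persist these records rather than hold them in memory.

```python
from collections import defaultdict


class SpendLedger:
    """Accumulates USD spend keyed by (model, use case)."""

    def __init__(self, prices: dict[str, tuple[float, float]]):
        # prices maps model -> (input price, output price) in USD per million tokens.
        self.prices = prices
        self.spend: dict[tuple[str, str], float] = defaultdict(float)

    def record(self, model: str, use_case: str, input_tokens: int, output_tokens: int) -> float:
        """Record one call and return its cost."""
        in_price, out_price = self.prices[model]
        cost = (input_tokens * in_price + output_tokens * out_price) / 1_000_000
        self.spend[(model, use_case)] += cost
        return cost

    def by_model(self) -> dict[str, float]:
        """Total spend per model across all use cases."""
        totals: dict[str, float] = defaultdict(float)
        for (model, _use_case), cost in self.spend.items():
            totals[model] += cost
        return dict(totals)


ledger = SpendLedger({"gpt-4-turbo": (10.0, 30.0), "gpt-3.5-turbo": (0.5, 1.5)})
ledger.record("gpt-4-turbo", "chat", 1_000_000, 1_000_000)
ledger.record("gpt-3.5-turbo", "classification", 2_000_000, 0)
print(ledger.by_model())
```

Keying on both model and use case is what lets you later ask which task is responsible for most of a model's bill.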

The Price War Opportunity

The AI model market is competitive and prices are falling. If you haven’t reviewed your model choices recently, you’re probably overpaying.

In the past year alone, major providers have cut prices multiple times. GPT-3.5 Turbo got significantly cheaper. Claude added more pricing tiers. Open-source models improved substantially. Model selection decisions made a year ago and left unchanged are likely leaving money on the table.

Good cost monitoring is essential here. You need to know exactly how much you’re spending on each model for each use case. With that data, you can quickly calculate whether switching makes financial sense when a new model releases or prices change. Without that visibility, you’re flying blind.
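The switching calculation itself is short once usage data exists. In the sketch below, the monthly token volumes, the candidate model's prices, and the one-off migration cost are hypothetical; only the current prices come from the GPT-4 Turbo figures quoted earlier.

```python
from typing import Optional


def monthly_cost(input_tokens: int, output_tokens: int, in_price: float, out_price: float) -> float:
    """USD per month given token volumes and prices per million tokens."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def switch_savings(
    usage: tuple[int, int],
    current: tuple[float, float],
    candidate: tuple[float, float],
    migration_cost: float = 0.0,
) -> tuple[float, Optional[float]]:
    """Return (monthly saving, payback period in months or None if there is no saving)."""
    saving = monthly_cost(*usage, *current) - monthly_cost(*usage, *candidate)
    payback = migration_cost / saving if saving > 0 else None
    return saving, payback


# Hypothetical use case: 2B input and 500M output tokens per month,
# moving from $10/$30 to a candidate priced at $5/$15,
# with $35,000 of one-off engineering and eval work.
saving, payback = switch_savings(
    usage=(2_000_000_000, 500_000_000),
    current=(10.0, 30.0),
    candidate=(5.0, 15.0),
    migration_cost=35_000.0,
)
print(f"saves ${saving:,.0f} per month, pays back in {payback:.1f} months")
```

This only answers the financial half of the question. The candidate still has to pass your evals before the saving is real.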

The Quality-Cost Trade-off

The hardest part of model selection is defining what “works” means for each task. How much quality degradation is acceptable? Who decides?

This requires collaboration between engineering, product, and finance. Engineers can assess the technical capabilities of different models. Product can define user expectations and minimum quality thresholds. Finance can establish budget constraints and margin targets. All three perspectives are necessary.

Letting any single team drive model selection leads to predictable failure modes. Engineers optimize for quality without considering cost. Finance optimizes for cost without considering user experience. Product chases features without considering either. Good outcomes come from balanced decision-making across all three.

Building a Model Selection Framework

Start by cataloging all the AI operations in your product. For each one, assess the complexity, quality requirements, volume, and user expectations.

Map out the available models and their characteristics — not just pricing, but latency, reliability, context window size, and capabilities. Use the OpenAI pricing calculator or the Anthropic pricing calculator to model per-task costs across tiers before finalizing your routing strategy. This gives you a decision matrix to match tasks to models systematically rather than arbitrarily.
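A decision matrix of this kind can be expressed as "filter by requirements, then take the cheapest fit". The sketch below is one possible shape for it; the model specs, quality tiers, and task requirements are invented placeholders that you would replace with numbers from your own evals and provider documentation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelSpec:
    name: str
    quality: int          # 1 (basic) to 3 (frontier), taken from your own evals
    p95_latency_s: float
    context_tokens: int
    blended_price: float  # USD per million tokens


@dataclass(frozen=True)
class TaskSpec:
    name: str
    min_quality: int
    max_latency_s: float
    min_context_tokens: int


def cheapest_fit(task: TaskSpec, models: list[ModelSpec]) -> Optional[ModelSpec]:
    """Return the cheapest model meeting every requirement, or None if none does."""
    fits = [
        m for m in models
        if m.quality >= task.min_quality
        and m.p95_latency_s <= task.max_latency_s
        and m.context_tokens >= task.min_context_tokens
    ]
    return min(fits, key=lambda m: m.blended_price, default=None)


# Hypothetical catalog and tasks.
MODELS = [
    ModelSpec("premium-model", 3, 4.0, 128_000, 20.0),
    ModelSpec("mid-tier-model", 2, 1.5, 16_000, 1.0),
    ModelSpec("budget-model", 1, 0.8, 8_000, 0.2),
]
TASKS = [
    TaskSpec("ticket_routing", 1, 2.0, 4_000),
    TaskSpec("summarization", 2, 5.0, 12_000),
    TaskSpec("legal_analysis", 3, 10.0, 100_000),
]

for task in TASKS:
    choice = cheapest_fit(task, MODELS)
    print(task.name, "->", choice.name if choice else "no model fits")
```

A `None` result is useful information: it means the requirements for that task cannot be met by anything in the catalog and need to be renegotiated.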

Test rigorously before making changes. Run comprehensive evals including edge cases. Monitor closely after deployment. Set alerts for quality degradation or increased error rates. Be prepared to roll back.
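The rollback decision can be reduced to a comparison against a pre-deployment baseline. The sketch below assumes you already collect a quality score and an error rate for each model; the metric names and the thresholds are hypothetical defaults, not recommendations.

```python
def should_roll_back(
    baseline: dict[str, float],
    current: dict[str, float],
    max_quality_drop: float = 0.02,
    max_error_increase: float = 0.01,
) -> bool:
    """True if quality fell or errors rose by more than the allowed margins."""
    quality_drop = baseline["quality"] - current["quality"]
    error_increase = current["error_rate"] - baseline["error_rate"]
    return quality_drop > max_quality_drop or error_increase > max_error_increase


# Hypothetical metrics measured before and after switching models.
baseline = {"quality": 0.90, "error_rate": 0.010}
healthy = {"quality": 0.895, "error_rate": 0.012}
degraded = {"quality": 0.85, "error_rate": 0.011}

print(should_roll_back(baseline, healthy))
print(should_roll_back(baseline, degraded))
```

Agreeing on the thresholds before deployment matters more than the code: it turns a rollback from a debate into a rule.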

Model selection should be an ongoing process, not a one-time decision. Set up quarterly reviews where you reassess model choices against cost trends, quality metrics, and new releases. Companies that do this systematically operate at significantly lower AI costs than those that set it and forget it. The guide to choosing the right usage metric covers how to align your model routing decisions with the value metric you charge customers, a connection many teams miss.

The Competitive Advantage

Getting model selection right is a competitive advantage. Companies that deliver the same quality at lower cost can either capture the margin improvement or pass savings to customers and undercut competitors.

That advantage only comes from treating model selection as a strategic capability — investing in evals, monitoring, and operational complexity, and giving it ongoing attention as the field evolves. It also requires breaking down silos between teams so everyone understands the trade-offs.

Most companies still aren’t doing this well. The market is immature, the tooling is primitive, and best practices are still forming. Companies that invest in this capability now will operate at a lower cost structure than competitors, and that advantage compounds over time.