FinOps for AI: Why Cloud Methods Fall Short

Why traditional cloud FinOps frameworks fail for AI workloads and how to build a specialized AI FinOps practice to optimize complex, usage-based costs.

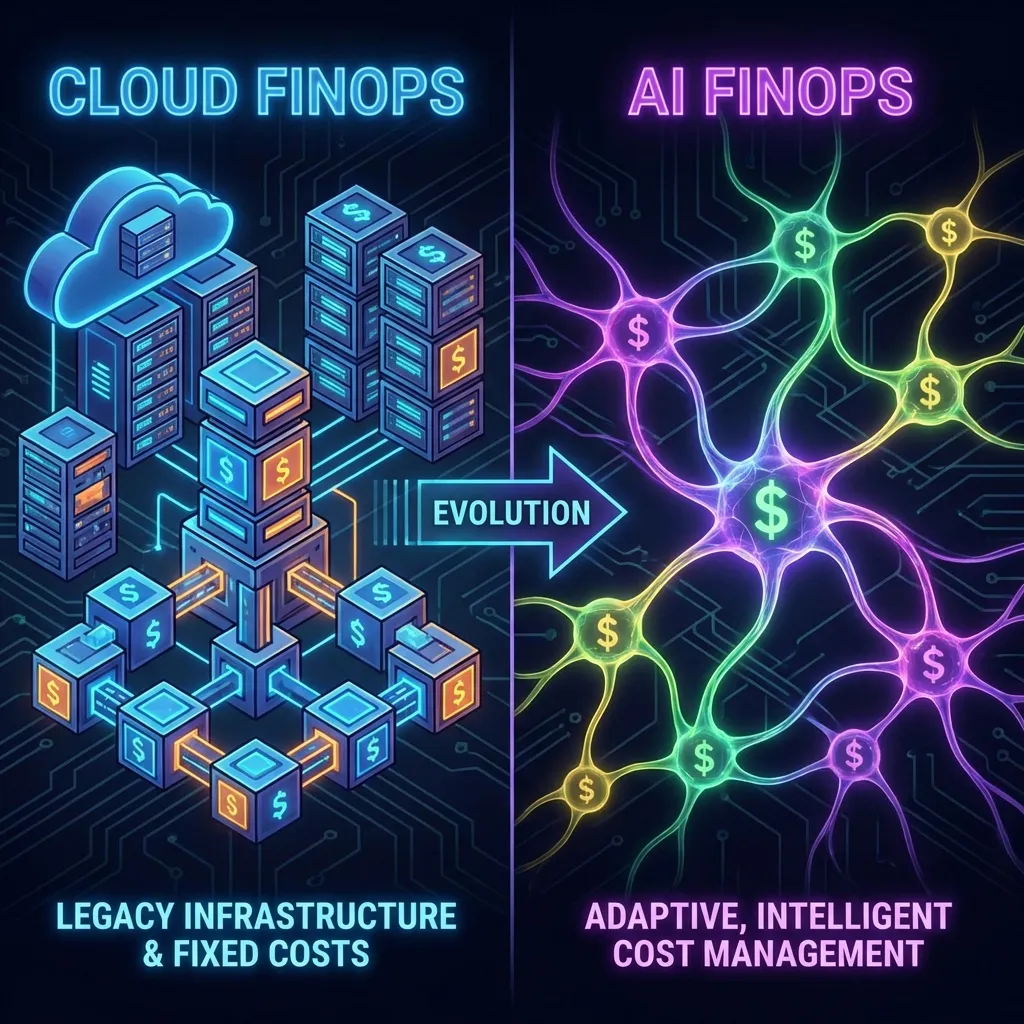

FinOps worked well for cloud infrastructure. Companies built sophisticated practices around optimizing AWS costs, rightsizing instances, and managing reserved capacity. Those same companies are now discovering that their FinOps playbook doesn’t translate to AI workloads. The assumptions are different, the cost drivers are different, and the optimization strategies are different.

This is creating a new category: FinOps for AI. It is close enough to traditional FinOps that the name makes sense, but different enough that applying the same approaches leads to blind spots, inefficiencies, and margin problems.

Why Cloud FinOps Doesn’t Work for AI

Traditional cloud FinOps focuses on infrastructure optimization. You examine your compute instances, storage volumes, and database configurations. You find underutilized resources and rightsize them. You negotiate volume discounts. You use reserved instances to lock in lower rates. The discipline is about getting more efficient with infrastructure spending.

AI costs don’t work this way. There’s infrastructure underneath, but that’s not where most of the cost variability comes from. AI costs are driven by usage patterns, prompt engineering choices, model selection, and application architecture — different cost drivers that require different optimization approaches.

A cloud FinOps team might look at your AI spending and suggest moving to cheaper compute instances or negotiating better rates with your AI provider. That misses the point. The real optimization opportunities are in how you’re using AI, not in the infrastructure you use to access it. Are you using expensive models where cheaper ones would work? Are your prompts inefficient? Are you caching results? These questions don’t fit the traditional FinOps framework.
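One of the usage-level questions above, whether results are cached, can be illustrated with a minimal sketch. The `call_model` callable and the `fake_model` stand-in are hypothetical and do not correspond to any real provider SDK, and the cache is a plain in-memory dictionary. A production setup would likely need expiry and a shared store.

```python
import hashlib
from typing import Callable, Dict


class CachedClient:
    """Wraps a model-calling function and reuses answers for repeated prompts."""

    def __init__(self, call_model: Callable[[str, str], str]) -> None:
        self._call_model = call_model   # hypothetical provider call: (model, prompt) -> text
        self._cache: Dict[str, str] = {}
        self.billable_calls = 0         # calls that actually reached the provider
        self.cache_hits = 0             # calls answered from the cache

    def complete(self, model: str, prompt: str) -> str:
        # Identical (model, prompt) pairs map to the same cache key.
        key = hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()
        if key in self._cache:
            self.cache_hits += 1
            return self._cache[key]
        self.billable_calls += 1
        result = self._call_model(model, prompt)
        self._cache[key] = result
        return result


def fake_model(model: str, prompt: str) -> str:
    # Stand-in for a paid API call so the sketch runs offline.
    return f"[{model}] answer to: {prompt}"


client = CachedClient(fake_model)
for _ in range(3):
    client.complete("small-model", "What is our refund policy?")
print(client.billable_calls, client.cache_hits)
```

Exact-match caching only helps when prompts repeat verbatim, so the hit rate is worth measuring before relying on it.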

The Usage-Based Nature of AI Costs

Cloud infrastructure costs are mostly capacity-based. You provision resources and pay for that capacity whether you use it or not. Optimization means matching your provisioned capacity to your actual needs, avoiding waste.

For API-based services, AI costs are usage-based. You pay per API call, per token processed, per inference request. There’s no unused capacity to optimize away. Every dollar spent represents actual usage. That changes the optimization game entirely.
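To make per-token billing concrete, here is a small sketch of how the cost of a single call might be computed. It assumes the common scheme of separate input and output prices quoted per million tokens. The `TokenPrice` type and the prices used are illustrative assumptions, not any provider's actual rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """USD price per one million tokens. Values used below are illustrative only."""
    input_per_million: float
    output_per_million: float


def call_cost(price: TokenPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: tokens times the per-million rate, for each direction."""
    return (input_tokens * price.input_per_million
            + output_tokens * price.output_per_million) / 1_000_000


example_price = TokenPrice(input_per_million=2.0, output_per_million=8.0)

# The same request with a prompt twice as long costs more, with no capacity change.
short_prompt_cost = call_cost(example_price, input_tokens=1_500, output_tokens=500)
long_prompt_cost = call_cost(example_price, input_tokens=3_000, output_tokens=500)
print(f"{short_prompt_cost:.4f} vs {long_prompt_cost:.4f}")
```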

Instead of rightsizing capacity, you need to optimize usage patterns. Instead of negotiating volume discounts on infrastructure, you need to reduce the volume of expensive operations. Instead of turning off unused resources, you need to make your used resources more efficient.

Traditional FinOps metrics don’t apply well either. Utilization rate and idle resource cost don’t mean anything for API-based AI services. You need metrics around cost per transaction, cost per user, and cost per feature — tracking the relationship between usage and value, not the relationship between spending and capacity.
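These metrics are straightforward to compute once each call carries a user and a feature label. The sketch below assumes a hypothetical `UsageRecord` shape with a precomputed cost per call. The field names and sample values are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, NamedTuple


class UsageRecord(NamedTuple):
    user_id: str
    feature: str
    cost_usd: float


def total_cost_by(records: Iterable[UsageRecord], field: str) -> Dict[str, float]:
    """Sum cost grouped by one record field, e.g. 'feature' or 'user_id'."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, field)] += record.cost_usd
    return dict(totals)


def cost_per_transaction(records: List[UsageRecord]) -> float:
    """Average cost of one call; 0.0 when there are no records."""
    if not records:
        return 0.0
    return sum(r.cost_usd for r in records) / len(records)


records = [
    UsageRecord("u1", "search", 0.01),
    UsageRecord("u1", "summarize", 0.04),
    UsageRecord("u2", "search", 0.01),
    UsageRecord("u2", "summarize", 0.06),
]
by_feature = total_cost_by(records, "feature")
by_user = total_cost_by(records, "user_id")
average = cost_per_transaction(records)
```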

The Multi-Model Complexity

Cloud FinOps handles a relatively stable set of services from a handful of providers such as AWS, Azure, and GCP. Services change gradually. Pricing is relatively predictable. Once you’ve optimized your cloud spend, it tends to stay optimized unless your usage patterns change dramatically.

AI is the opposite. New models release constantly. Pricing changes frequently. A model that was the best choice last month might be obsolete this month. Providers introduce new tiers, new pricing models, new capabilities. The situation is in constant flux.

This requires a different approach to financial governance. You can’t optimize once and move on. You need continuous monitoring and regular reevaluation, processes to assess new models as they release, and tracking of pricing changes across multiple providers.

Most companies use multiple AI providers: OpenAI for some workloads, Anthropic for others, open-source models for specific use cases. Each provider has different pricing, different capabilities, different strengths. Managing costs across this heterogeneous environment is far more complex than managing costs within a single cloud provider. Track and compare current rates at the AI token pricing tracker.
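One way to keep a multi-provider comparison repeatable is to re-price a fixed workload against a price table whenever rates change. The sketch below is an assumption-laden illustration: the model names and prices in `PRICE_TABLE` are invented, and the self-hosted entry assumes infrastructure cost has already been amortized into a per-token figure.

```python
from typing import Dict, List, Tuple

# Hypothetical (input, output) USD prices per million tokens; not real rates.
PRICE_TABLE: Dict[str, Tuple[float, float]] = {
    "provider_a/large": (5.0, 15.0),
    "provider_b/medium": (3.0, 12.0),
    "self_hosted/small": (0.5, 1.5),
}


def monthly_cost(model: str, calls: int, avg_in: int, avg_out: int) -> float:
    """Monthly cost of a workload given call volume and average tokens per call."""
    price_in, price_out = PRICE_TABLE[model]
    return calls * (avg_in * price_in + avg_out * price_out) / 1_000_000


def rank_models(calls: int, avg_in: int, avg_out: int) -> List[Tuple[str, float]]:
    """All models in the table, cheapest first, for the same workload."""
    costs = [(m, monthly_cost(m, calls, avg_in, avg_out)) for m in PRICE_TABLE]
    return sorted(costs, key=lambda pair: pair[1])


ranking = rank_models(calls=1_000_000, avg_in=1_000, avg_out=200)
for model, cost in ranking:
    print(f"{model}: ${cost:,.0f}")
```

Price alone says nothing about whether a model is good enough for the task, so a ranking like this is only one input to the decision.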

The Lack of Built-In Tools

Cloud providers have invested heavily in cost management tools. AWS has Cost Explorer, billing alerts, and detailed tagging. Azure and GCP have similar capabilities. These tools aren’t perfect, but they give you a foundation for understanding and managing your spending.

AI providers are earlier in their maturity. Most don’t provide sophisticated cost management tools. OpenAI provides basic usage dashboards and Anthropic provides consumption data, but these are primitive compared to cloud provider tools. Detailed analytics, forecasting, anomaly detection, and optimization recommendations are largely absent. Use the OpenAI pricing calculator to model cost scenarios before committing to a workload.

Companies end up building their own tooling or using third-party solutions. Most don’t have the resources to build sophisticated cost management tools from scratch, and the third-party market is still immature. LLM ops platforms exist but focus more on performance and quality than cost management.

The result: most companies are managing AI costs with spreadsheets and manual processes. They export usage data, join it with pricing information, and try to make sense of it. As AI spending grows, this manual approach breaks down.

The Organizational Challenge

Traditional FinOps often lives in a dedicated team or within finance. They work with engineering to implement optimizations, and ownership is clear. FinOps is responsible for managing cloud costs.

AI cost management doesn’t fit neatly into any single team. It’s not purely an infrastructure problem, so it doesn’t naturally belong to the platform team. It’s not purely a financial problem, so finance can’t own it alone. It’s tied to product decisions, so product needs to be involved. Engineering choices drive costs, so engineering needs accountability.

This creates organizational ambiguity. Who owns AI cost optimization? Who’s responsible when costs spike? Who makes decisions about trade-offs between cost and quality? In most companies, the answer is “kind of everyone and kind of no one.” That diffusion of responsibility means optimization often doesn’t happen.

Companies that handle this well create cross-functional ownership. Representatives from engineering, product, and finance meet regularly to review AI costs, make optimization decisions, and set priorities. They establish clear processes for how decisions get made and who has authority over different types of optimizations.

The Real-Time Requirement

Cloud FinOps can operate on a monthly cycle. You look at your bill at the end of the month, identify optimization opportunities, and implement changes for next month. Costs are relatively predictable.

AI costs are more volatile. A bug in your code can spike costs within hours. A new feature can drive unexpected usage within days. Power users can push costs up 10x before you notice. By the time the monthly bill arrives, significant damage may already be done.

This requires real-time or near-real-time cost visibility. You need to know today what your spending trajectory looks like this month. You need alerts when costs deviate from expected patterns. You need the ability to respond quickly before cost issues become expensive problems.
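A minimal sketch of both ideas, a spending trajectory and a deviation alert, follows. It assumes daily spend totals are already available, uses a simple linear run-rate for the projection, and flags a spike when the latest day exceeds a multiple of the recent median. The function names and the threshold of 3x are arbitrary choices for illustration.

```python
import calendar
import statistics
from datetime import date
from typing import Sequence


def projected_month_end_spend(spend_to_date: float, today: date) -> float:
    """Linear run-rate: average daily spend so far times the days in this month."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month


def is_spike(recent_daily_spend: Sequence[float], latest: float,
             factor: float = 3.0) -> bool:
    """Flag the latest value if it exceeds `factor` times the median of recent days."""
    if not recent_daily_spend:
        return False  # no baseline yet, so nothing to compare against
    return latest > factor * statistics.median(recent_daily_spend)


projection = projected_month_end_spend(1200.0, date(2024, 6, 10))
alert = is_spike([100.0, 110.0, 95.0, 105.0], latest=450.0)
print(projection, alert)
```

The same check can run hourly instead of daily when a bug could burn through budget faster than a daily job would catch.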

Most traditional FinOps tools and processes aren’t built for this pace. They’re designed for monthly review and optimization cycles. Adapting them to real-time AI cost management requires new capabilities, new tools, and new processes.

The Strategic Importance

Cloud infrastructure is a cost center, but usually a manageable percentage of revenue. For most SaaS companies, cloud costs are 10 to 20% of revenue.

For AI-native companies, AI costs can be 30 to 50% of revenue or more. It’s often the determining factor in whether the business model works at all. When AI costs are 50% of revenue, cutting them by 20% adds 10 points of gross margin; at 30% of revenue, the same cut adds 6 points. That can be the difference between a healthy business and a struggling one.
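The margin effect is simple arithmetic: the points of gross margin gained equal AI cost as a share of revenue multiplied by the fractional cost reduction. A short sketch, assuming revenue and all other costs stay fixed (the function name is illustrative):

```python
def margin_points_gained(ai_cost_share: float, reduction: float) -> float:
    """Gross margin points gained when AI cost (as a share of revenue) is cut.

    ai_cost_share: AI cost divided by revenue, e.g. 0.5 for 50%.
    reduction: fractional cut in AI cost, e.g. 0.2 for 20%.
    """
    return ai_cost_share * reduction * 100


for share in (0.3, 0.4, 0.5):
    points = margin_points_gained(share, 0.2)
    print(f"AI cost at {share:.0%} of revenue: +{points:.0f} margin points")
```

A 20% reduction yields 6 points when AI costs are 30% of revenue and 10 points when they are 50%, so the payoff grows with how AI-heavy the cost structure is.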

AI cost management is a strategic imperative, not an operational efficiency exercise. Companies that get good at managing AI costs will have better unit economics than competitors. They’ll be able to price more aggressively, invest more in features, and grow more sustainably.

That strategic importance means AI FinOps deserves senior leadership attention in a way cloud FinOps often doesn’t. CFOs, CTOs, and CEOs need to understand AI cost dynamics, track key metrics, and ensure the organization has the capabilities to manage these costs effectively.

Building AI FinOps Capabilities

Start with visibility. The guide to tracking and metering usage events covers the instrumentation stack in detail. Tag every API call with metadata about what it’s serving. Build dashboards that show costs by feature, by user segment, by time period. You can’t optimize what you can’t measure.
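As a rough illustration of tagging, each call can emit a structured usage event that later feeds dashboards. The `UsageEvent` fields and the list-based sink below are hypothetical. A real pipeline would likely write to a log stream or metering service instead.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class UsageEvent:
    """Metadata attached to one AI API call. Field names are illustrative."""
    feature: str
    user_segment: str
    model: str
    input_tokens: int
    output_tokens: int
    timestamp: float = field(default_factory=time.time)


def record_usage(event: UsageEvent, sink: List[str]) -> str:
    """Serialize the event as one JSON line and append it to the sink."""
    line = json.dumps(asdict(event), sort_keys=True)
    sink.append(line)
    return line


log: List[str] = []
record_usage(
    UsageEvent(feature="summarize", user_segment="enterprise",
               model="medium-model", input_tokens=1200, output_tokens=300),
    log,
)
print(log[0])
```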

Establish governance processes. Who approves new AI features? What’s the process for evaluating new models? How do you decide when to implement rate limiting or usage caps? These decisions need clear ownership and systematic evaluation, not ad hoc reactions.
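Once a usage cap has been agreed on, enforcing it can be quite simple. The sketch below assumes a per-user daily budget in USD and an estimated cost known before each call. The `UsageCap` class is a hypothetical in-memory illustration, not a production rate limiter.

```python
from typing import Dict


class UsageCap:
    """Tracks per-user spend and refuses calls that would exceed a daily budget."""

    def __init__(self, daily_limit_usd: float) -> None:
        self.daily_limit_usd = daily_limit_usd
        self._spent: Dict[str, float] = {}

    def try_spend(self, user_id: str, estimated_cost_usd: float) -> bool:
        """Reserve budget for a call; return False if it would exceed the cap."""
        spent = self._spent.get(user_id, 0.0)
        if spent + estimated_cost_usd > self.daily_limit_usd:
            return False
        self._spent[user_id] = spent + estimated_cost_usd
        return True

    def reset(self) -> None:
        """Clear all counters, e.g. from a scheduled job at the start of each day."""
        self._spent.clear()


cap = UsageCap(daily_limit_usd=1.00)
first = cap.try_spend("u1", 0.60)        # within budget
second = cap.try_spend("u1", 0.60)       # would total 1.20, so it is refused
other_user = cap.try_spend("u2", 0.60)   # budgets are tracked per user
print(first, second, other_user)
```

What happens on refusal (a hard block, a cheaper fallback model, or a queue) is the governance decision. The code only enforces it.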

Invest in experimentation capabilities. You need to test different models, different prompt strategies, different architectures, and understand the cost implications. This requires good eval frameworks, the ability to run controlled experiments, and clear ways to measure success.
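One possible way to fold cost into an experiment readout is cost per successful result: average cost per call divided by the eval pass rate. The sketch below assumes eval results are already summarized per model. The model names, pass rates, and costs are invented for illustration.

```python
import math
from typing import List, NamedTuple, Optional


class EvalResult(NamedTuple):
    model: str
    pass_rate: float      # fraction of eval cases passed, between 0 and 1
    avg_cost_usd: float   # average cost per call during the experiment


def cost_per_success(result: EvalResult) -> float:
    """Expected spend per passing output; infinite if nothing passes."""
    if result.pass_rate <= 0:
        return math.inf
    return result.avg_cost_usd / result.pass_rate


def cheapest_acceptable(results: List[EvalResult],
                        min_pass_rate: float) -> Optional[EvalResult]:
    """Cheapest model per success among those meeting the quality bar, if any."""
    candidates = [r for r in results if r.pass_rate >= min_pass_rate]
    return min(candidates, key=cost_per_success, default=None)


results = [
    EvalResult("large", pass_rate=0.95, avg_cost_usd=0.020),
    EvalResult("medium", pass_rate=0.90, avg_cost_usd=0.006),
    EvalResult("small", pass_rate=0.70, avg_cost_usd=0.001),
]
choice = cheapest_acceptable(results, min_pass_rate=0.85)
print(choice.model if choice else "no model meets the bar")
```

Setting the quality bar first and minimizing cost second keeps the experiment from drifting toward the cheapest model regardless of output quality.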

Build cost forecasting models. Understand your usage patterns and how they drive costs. Project future spending based on growth assumptions. Track leading indicators that predict cost changes before they show up in the bill. This gives you the ability to be proactive rather than reactive.
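A first forecasting model can be as simple as compounding call volume at an assumed growth rate and multiplying by cost per call. The sketch below makes exactly those assumptions: constant monthly growth and a flat cost per call. Real usage rarely behaves so neatly, so treat it as a starting point.

```python
from typing import List


def forecast_spend(current_monthly_calls: float, monthly_growth: float,
                   cost_per_call: float, months: int) -> List[float]:
    """Projected spend for each of the next `months` months.

    monthly_growth is fractional, e.g. 0.10 for 10% growth per month.
    """
    forecast: List[float] = []
    calls = current_monthly_calls
    for _ in range(months):
        calls *= 1 + monthly_growth          # compound the call volume
        forecast.append(calls * cost_per_call)
    return forecast


projection = forecast_spend(current_monthly_calls=100_000, monthly_growth=0.10,
                            cost_per_call=0.01, months=3)
print([round(value, 2) for value in projection])
```

Leading indicators such as active users or average prompt length can replace the flat growth assumption once there is enough history to fit them.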

Create a culture of cost awareness. Engineers making decisions about AI implementation should understand the cost implications. Product managers launching new features should consider the unit economics. This isn’t about being cheap — it’s about being intentional about where you spend and ensuring the value justifies the cost.

The Emerging Ecosystem

An ecosystem is forming to support AI FinOps. LLM ops platforms are adding cost monitoring features. Specialized cost intelligence tools are being built for AI workloads. Cloud providers are starting to offer better visibility into AI spending.

The tools are still immature. Best practices are still forming. Companies that invest in building AI FinOps capabilities now will have a head start — developing the processes, tools, and organizational capabilities that will become standard in a few years.

Start simple but start now. Don’t wait for the perfect solution. Implement basic cost tracking. Build simple dashboards. Establish lightweight governance processes. Iterate and improve as you learn what you actually need.

AI FinOps is becoming a core capability for any company building on AI infrastructure. Companies that treat it seriously and build the right organizational structures will have a sustained advantage. Those that apply traditional FinOps approaches or ignore the problem will find escalating costs eroding their margins.